I'm interested in developing spatially intelligent systems, combining generative modeling and neural rendering to reconstruct and synthesize the visual world. My interests lie in 3D generation and neural rendering, at the intersection of computer vision, computer graphics, and robotics.

Research

WorldFlow3D: Flowing Through 3D Distributions for Unbounded World Generation
Amogh Joshi*, Julian Ost*, Felix Heide
Preprint, 2026
project page / arXiv
We present WorldFlow3D, a novel approach for generating unbounded 3D worlds via latent-free sequential flow matching through 3D data distributions.

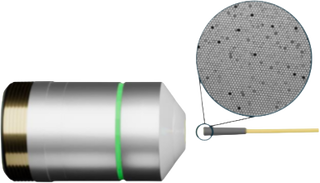

Seeing Through Fibers: Unsupervised Image Reconstruction in Fiber Bundle Imaging Systems
Amir Reza Vazifeh, Congli Wang, Amogh Joshi, Ilya Chugunov, Jipeng Sun, Jiwoon Yeom, Jason W. Fleischer, José S. Pulido, Felix Heide
Optics Express, 2026
project page / publication
We introduce an unsupervised method that reconstructs high-resolution fiber bundle images from misaligned bursts without calibration or paired data.

LSD-3D: Large-Scale 3D Driving Scene Generation with Geometry Grounding
Julian Ost*, Andrea Ramazzina*, Amogh Joshi*, Maximilian Bömer, Mario Bijelic, Felix Heide
AAAI, 2026
project page / publication / arXiv
We present LSD-3D, a method for generating 3D driving scenes with coherent 3D geometry and photorealistic, high-fidelity texture.

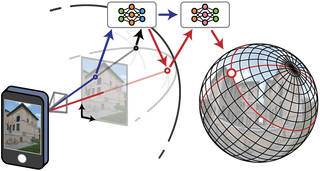

Neural Light Spheres for Implicit Image Stitching and View Synthesis
Ilya Chugunov, Amogh Joshi, Kiran Murthy, Francois Bleibel, Felix Heide
SIGGRAPH Asia, 2024
project page / publication / arXiv
We design a spherical neural light field model for implicit panoramic image stitching and re-rendering, capable of handling depth parallax, view-dependent lighting, and scene motion.

Other Work

I've always been deeply interested in the analysis and production of data, of any sort. I've worked heavily in agrobotics and agricultural machine learning, to scale real data and infrastructure, improve efficiency, and produce synthetic crop data. I've also spent time trying to understand the learning process of VLMs, and correlate patterns in information sharing with ideological insight in social science. See this for more details.

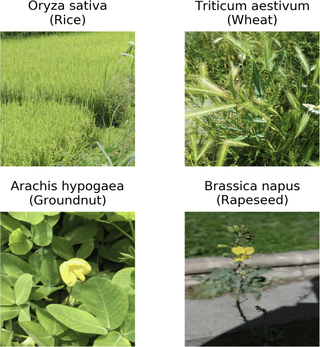

iNatAg: Multi-Class Classification Models Enabled by a Large-Scale Benchmark Dataset with 4.7M Images of 2,959 Crop and Weed Species
Naitik Jain, Amogh Joshi, Mason Earles
CVPR Vision for Agriculture, 2025
publication / arXiv
We introduce iNatAg, a 4.7M-image dataset of 2,959 crop and weed species - one of the world's largest for agriculture - and benchmark models achieving state-of-the-art classification performance.

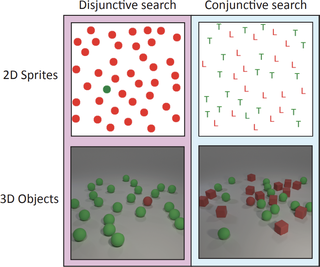

Understanding the Limits of Vision Language Models Through the Lens of the Binding Problem
Declan Campbell, Sunayana Rane, Tyler Giallanza, Nicolò De Sabbata, Kia Ghods, Amogh Joshi, Alexander Ku, Steven M. Frankland, Thomas L. Griffiths, Jonathan D. Cohen, Taylor W. Webb
NeurIPS, 2024
publication / arXiv
We identify that state-of-the-art VLMs fail at basic multi-object reasoning due to the binding problem, which limits simultaneous entity representation - similar to human brain processing.

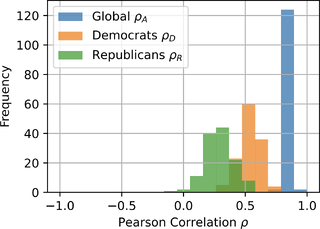

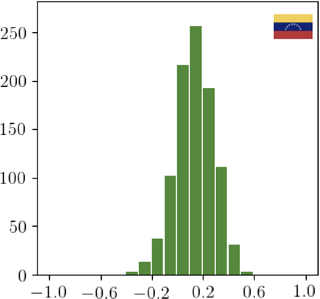

Examining Similar and Ideologically Correlated Imagery in Online Political Communication
Amogh Joshi, Cody Buntain
ICWSM, 2024
publication / arXiv
We investigate how US national politicians' use of various visual media on Twitter reflects their political positions, identifying limitations in standard image characterization methods.

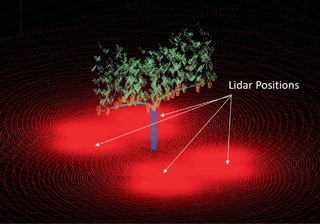

An Open Source Simulation Toolbox for Annotation of Images and Point Clouds in Agricultural Scenarios
Dario Guevara, Amogh Joshi, Pranav Raja, Elisabeth Forrestel, Brian Bailey, Mason Earles
ISVC, 2023
publication
We present an open-source simulation toolbox designed for the easy generation of synthetic labeled data for both RGB imagery and point cloud information, applicable to a wide array of cultivars.

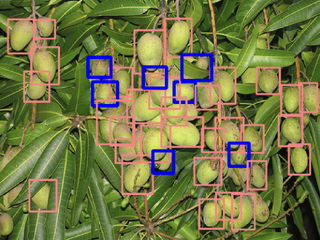

Standardizing and Centralizing Datasets for Efficient Training of Agricultural Deep Learning Models
Amogh Joshi, Dario Guevara, Mason Earles
Plant Phenomics, 2023
publication / arXiv
We present methods for enhancing data efficiency in agricultural computer vision that improve performance and reduce training time, and introduce a novel set of model benchmarks.

Exploiting the Right: Inferring Ideological Alignment in Online Influence Campaigns Using Shared Images
Amogh Joshi, Cody Buntain
ICWSM PhoMemes, 2022
publication / arXiv / press
We develop models to analyze the ideological presentation of foreign Twitter accounts based on shared images, revealing inconsistencies in ideological positions across different content types.

Projects

The following are major projects I've helped develop.

AgML: An Open-Source Library for Agricultural Machine Learning
AI Institute for Next Generation Food Systems
project / info
Since its inception, I have led the development of AgML. We have aggregated the world's largest collection of agricultural deep learning datasets, produced benchmarks and pretrained weights for state-of-the-art models, and developed a suite of tools for data preprocessing, model training, and deployment in an easy-to-use API.

© Amogh Joshi, 2025.